Let’s get straight into it. This week’s issue looks at what separates AI experiments from real business value. In Field Notes, we break down how SAP turned a costly support problem into a measurable impact with a focused AI use case. In Project Playground, we dig into practical tips for building agentic AI — how to evaluate, trace, and iterate on workflows with the same rigor product marketers apply to GTM strategy. Let’s go!

🚨Signal or Noise? — This Week in AI x Marketing

🔍 Field Notes: From AI Pilots to Proof of Value

🧪 Project Playground: Practical Tips for Building Agentic AI

📣 AI Word(s) of the Week

🚨Signal or Noise? — This Week in AI x Marketing

Summary: Only around 10.5% of large B2B marketers and 7.4% of smaller firms are using AI for market‑mix modelling, while just 11.7% of large businesses and 25.3% of smaller ones are using it for segmentation and targeting.

Why it matters: If your team lacks the skills to use AI effectively, even tools with potential won’t drive value — so you’ll want to budget time for capability‑building, not just buying tools.

Summary: A new survey finds that 86% of Gen Z professionals in B2B use AI daily, and a full 95% of all respondents say they use AI at least weekly.

Why it matters: If you’re a newsletter founder or an early‑career marketer, this signals that the buyer base is shifting. Your content, tools and messaging need to align with a more AI‑savvy audience.

Summary: With AI‑powered search expected to drive ~20% of B2B organic traffic, companies must adopt structured data and schema markup to remain visible in the “answer engine” era.

Why it matters: If your B2B brand depends on organic traffic or content marketing, the SEO rules are changing — you’ll want to review your website’s structure and content so AI‑driven discovery doesn’t bypass you.

🔍 Field Notes: From AI Pilots to Proof of Value

Recent studies paint a clear picture of the AI reality gap. MIT reports that 95% of generative AI pilots fail to reach production, RAND found that 46% of companies scrap projects before launch, and Boston Consulting Group says only 24% have the capabilities to turn AI experiments into business value. In short — most organizations are spending on AI, but few are seeing returns.

One exception: SAP’s journey with Coveo, recently profiled in Forbes by Maribel Lopez (source).

Facing costly customer support demands across 300M+ cloud users, SAP didn’t start with “an AI project.” Instead, they started with a measurable business problem — unnecessary support tickets — and worked backward to find where AI could help.

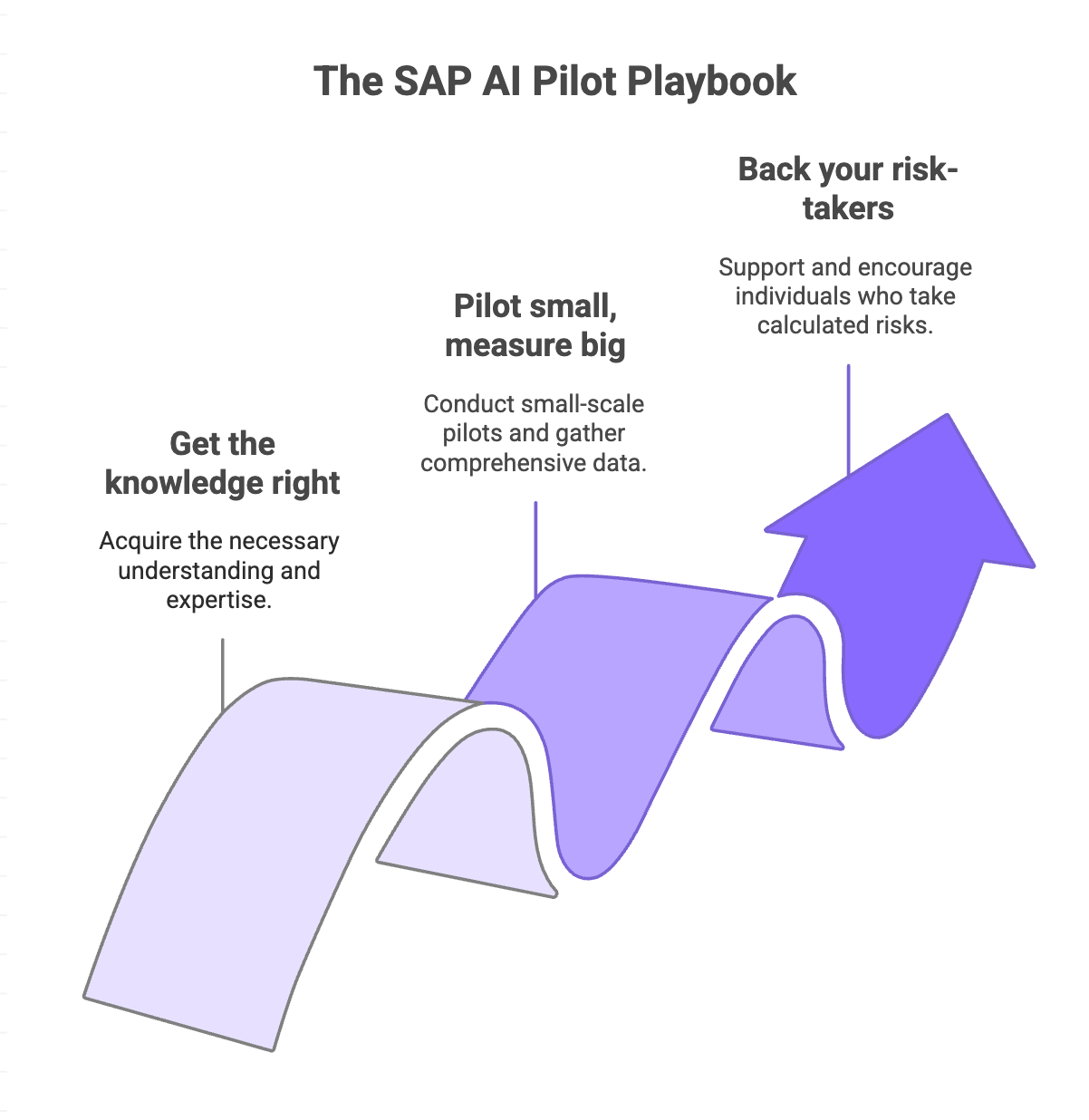

Step one: Get the knowledge right.

Before touching any generative AI tools, SAP invested heavily in the quality of its 10M+ knowledge assets — help docs, KB articles, community posts, and videos. As one exec put it: “If you’re serving dinner with poor ingredients, it doesn’t matter how great the chef is.”

Step two: Pilot small, measure big.

SAP’s Concur division used Coveo’s AI-powered search as a testing ground. The result: a 30% drop in support cases and €8M in annual cost avoidance — real ROI in under six months.

Step three: Back your risk-takers.

Leadership made one crucial cultural move: telling teams “You won’t be punished for trying.” That single statement unlocked faster iteration and bolder experimentation — the difference between another stalled pilot and a success story.

👉 Takeaway: AI success isn’t about deploying the flashiest tech. It’s about focus, foundations, and follow-through — picking real problems, cleaning the data behind them, and creating the safety for teams to experiment without fear.

🧪 Project Playground

Quick recap for my new subscribers: Over the next several weeks, this Project Playground feature follows the latest Agentic AI course syllabus from DeepLearning.AI, taught by Andrew Ng (an AI legend), and recaps what I learn each week through a product marketing lens. We’ve covered Modules 1, 2, and 3 of five so far. In a few weeks, part two of this project kicks off, where you can build an agentic AI marketing workflow along with me.

⚙️ Agentic AI Module 4: Practical Tips for Building Agentic AI

Most AI systems don’t fail because the model is weak — they fail because no one measures how well the workflow actually performs.

This module focused on evals and error analysis — the practical habits that turn agentic AI from a prototype into a reliable system.

🧪 Step 1: Build Small Evals That Keep You Honest

Before scaling, test your workflow with a mini eval — a set of 10–20 labeled examples.

For example, if your agent extracts competitor launch dates from press releases, manually tag the correct dates and compare them to the model’s output.

Each time you adjust prompts or algorithms, rerun the eval to see if performance improves.

💡 PMM translation: Treat it like a message test — start small, measure, iterate. Don’t rely on vibes.
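Here is a minimal sketch of what a mini eval like this could look like in Python. Everything in it is an assumption for illustration: `naive_extractor` is a hypothetical stand-in for your real agent, the three press-release snippets are made up, and dates are assumed to be in ISO format (YYYY-MM-DD). A real eval would use 10–20 examples, as described above.

```python
import re
from dataclasses import dataclass


@dataclass
class EvalExample:
    press_release: str  # input text given to the agent
    expected_date: str  # hand-labeled launch date (YYYY-MM-DD)


def run_eval(extract_date, examples):
    """Return (accuracy, failures) for an extractor over labeled examples."""
    failures = []
    for ex in examples:
        predicted = extract_date(ex.press_release)
        if predicted != ex.expected_date:
            failures.append((ex.press_release, ex.expected_date, predicted))
    accuracy = (len(examples) - len(failures)) / len(examples)
    return accuracy, failures


def naive_extractor(text):
    """Hypothetical stand-in for the agent: returns the first ISO date found."""
    match = re.search(r"\d{4}-\d{2}-\d{2}", text)
    return match.group(0) if match else None


# Made-up examples; the second one contains a publication date before the launch date.
examples = [
    EvalExample("Acme will launch Widget 2 on 2025-03-14.", "2025-03-14"),
    EvalExample("Published 2025-01-02. Launch is set for 2025-02-10.", "2025-02-10"),
    EvalExample("Globex ships its new CRM on 2025-06-01.", "2025-06-01"),
]

accuracy, failures = run_eval(naive_extractor, examples)
print(f"accuracy: {accuracy:.0%}, failures: {len(failures)}")
for text, expected, predicted in failures:
    print(f"expected {expected}, got {predicted}: {text}")
```

The point of keeping `run_eval` separate from the extractor is that you can swap in a new prompt or model, rerun the same labeled set, and compare the accuracy numbers directly.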

🔍 Step 2: Trace Errors Like a Detective

When something breaks, trace the workflow step by step to find where the logic fell apart.

You’re looking at two levels of output:

Traces: the full set of intermediate outputs across every step of the workflow

Spans: the output of a single step

Most bad outputs come from:

Weak search terms

Poor source selection

Faulty reasoning over text

💡 PMM translation: Just like a launch review — was the messaging off, the data wrong, or the analysis shallow? Find the root cause before you fix it.
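To make traces and spans concrete, here is a small Python sketch that records one span per step and collects them into a trace. The three step functions (`write_search_terms`, `pick_sources`, `summarize`) are hypothetical placeholders, not part of the course material, and the `example.com` URL is made up. In a real workflow each step would call a model or a search tool.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    step: str       # name of the workflow step
    output: object  # what that single step produced


@dataclass
class Trace:
    spans: list = field(default_factory=list)

    def record(self, step, output):
        """Store one step's output as a span and pass the output through."""
        self.spans.append(Span(step, output))
        return output


# Hypothetical placeholder steps for a three-step research workflow.
def write_search_terms(question):
    return [question.lower()]


def pick_sources(terms):
    return [f"https://example.com/search?q={t.replace(' ', '+')}" for t in terms]


def summarize(sources):
    return f"Summary based on {len(sources)} source(s)"


def run_workflow(question):
    trace = Trace()
    terms = trace.record("search_terms", write_search_terms(question))
    sources = trace.record("source_selection", pick_sources(terms))
    answer = trace.record("reasoning", summarize(sources))
    return answer, trace


answer, trace = run_workflow("Competitor launch dates")
for span in trace.spans:
    print(f"{span.step}: {span.output}")
```

When a final answer looks wrong, reading the spans in order shows which of the three failure points above (search terms, source selection, reasoning) is the likely culprit.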

🧭 Step 3: Let Error Analysis Drive Next Steps

Once you identify where performance breaks, use that data to prioritize improvements — better retrieval, clearer prompts, cleaner data.

💡 PMM translation: Think of your AI workflow like a GTM engine. Each iteration reveals where your signal weakens — and where to double down.
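One simple way to turn error analysis into a priority list is to tally which step was at fault for each bad output. The sketch below assumes you have already reviewed your failures by hand and labeled each one with a step name. The labels and counts here are invented for illustration.

```python
from collections import Counter

# Hypothetical review notes: for each bad output, the step judged to be at fault.
reviewed_failures = [
    "search_terms", "source_selection", "source_selection",
    "reasoning", "source_selection", "search_terms",
]


def rank_error_sources(labels):
    """Return (step, count, share) tuples, most frequent first."""
    counts = Counter(labels)
    total = len(labels)
    return [(step, n, n / total) for step, n in counts.most_common()]


ranked = rank_error_sources(reviewed_failures)
for step, n, share in ranked:
    print(f"{step}: {n} of {len(reviewed_failures)} ({share:.0%})")
```

In this made-up tally, source selection accounts for half of the failures, so that is where the next round of work would go before touching prompts or reasoning.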

📋 Cheat Sheet: How Marketers Can Apply Module 4

| Agentic AI Step | What It Means | PMM Parallel |
| --- | --- | --- |
| 🧪 Build Evals | Test your workflow with 10–20 real examples to track accuracy | Like running a quick message test before launch |
| 🔍 Trace Errors | Review each step’s output to pinpoint what failed | Like diagnosing why a GTM campaign underperformed |
| 🧭 Iterate Intentionally | Use eval results to guide what to fix or optimize next | Like using campaign data to improve next quarter’s plan |

Key Takeaway:

Agentic systems don’t improve by luck — they improve by measurement, tracing, and iteration. The best builders think like the best marketers: they don’t just launch, they learn.

📣 AI Word(s) of the Week

Traces: the full set of intermediate outputs across every step of the workflow

Spans: the output of a single step

Thanks for reading! Please note that there will not be an issue going out next week because I will be on vacation, but the following week I’ll be back in your inboxes.

As always, stay curious and have fun!

Best,

Skyler Neal